I've been saying this for the past two years. If you're not actively learning how to earn with AI, you're putting yourself in a bad position. And this isn't just about entry-level workers. I don't know why people keep trying to frame it like that, like this only hits the bottom rung and everyone else is safe. That's not what I'm seeing at all. This is going to affect everyone.

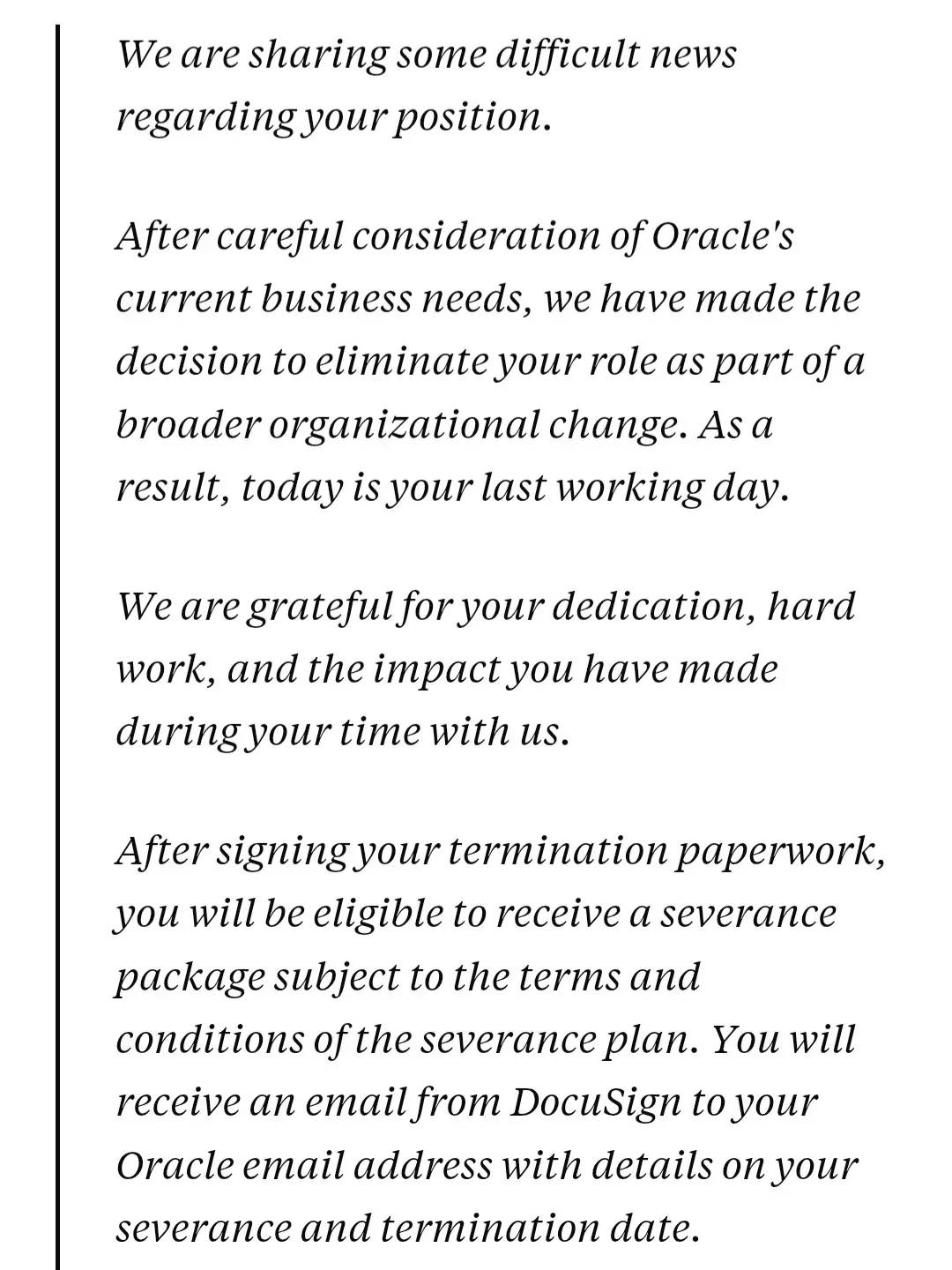

Oracle just cut 30,000 jobs. That's roughly 20% of its workforce. That's not a layoff. That's a structural reset. That's a company stepping back and saying they don't need as many people to operate anymore. And when you see numbers like that, you shouldn't brush it off like it's some isolated event or a one-time correction. It's not. It's a signal. It's showing you where this is going.

If you want to understand why, look at what AI has done in just the last year. Not five years. Not ten. One year. A year ago, image generation was interesting, but it wasn't usable for serious business in a lot of categories. Food photography is a perfect example. It was bad. You could spot it instantly. The textures were wrong, the proportions were off, the lighting felt fake, and if you tried to use it in a real campaign, it didn't hold up. Today, if it's set up correctly, you can't tell.

Same thing with writing. A year ago, AI was a helper. Fix grammar, reword a sentence, clean up a blog post. If you let it run on its own, the output was garbage. Generic, thin, robotic, obvious. Now that same category of system can go out, find a trending topic, decide what fits your business, write the content, publish it, and if it's tuned properly, it sounds like you wrote it. Clean. Natural. On voice.

That shift matters. Because when you combine that with automation systems and robotics, you're not talking about a tool anymore. You're talking about systems replacing layers of work. Real work. Skilled work. White-collar work. Creative work. Analytical work. Admin work. Legal work. Marketing. Operations. All of it.

And if you're not the one building those systems, you need to start asking yourself where you fit in the next few years.

I'll give you a real example. I built an AI lawyer. Not a gimmick. Not a toy. A real system. I paid for access to millions of pages of case law, federal law, and state law documents, and trained a model specifically for that use case. I also have lawyer clients, so I had real people to test it against.

We ran an experiment. A group of attorneys each drafted a contract based on the same criteria. Then I mixed all the contracts together, added the AI-generated one, and sent the set back out. Each lawyer reviewed every contract except their own and tried to spot the one written by AI.

Two of them correctly picked out the AI contract. Not because it was worse. Not because it missed something. Because it had no flaws. It was too clean. That was the giveaway. Nobody else could tell.

Now, to be clear, I'm not saying AI is walking into court tomorrow and arguing cases. That's not the point. But contract work, with the right setup, can absolutely replace a contract attorney. Fully. Today. Not eventually. Right now.

The reason systems like that aren't everywhere yet isn't capability. It's access, licensing, data rights, and gray areas. In my case, the license on that law library made public deployment questionable. So I didn't commercialize it. But capability-wise, that line is already crossed.

That's what people keep missing. They're talking about AI like it's getting close. In a lot of areas, it's already here.

So now the real question becomes how long before this becomes easier to build, cheaper to run, more accessible, more normalized. And when that happens, how many people are suddenly competing for work they thought was safe?

That's why this isn't just an entry-level problem. It's not just support reps, junior writers, or interns. This hits everything. Bottom, middle, and eventually a lot of the top. The speed of progress over the last year is honestly insane. I can't think of anything that's moved this fast.

And every time something like this happens, you see the same reactions. Companies should be taxed. Government needs to step in. Regulation is coming. No, it's not. Not in any meaningful way.

The first problem is tracking. There's no clean way to measure all of this across industries. But the bigger problem is speed. AI is moving too fast. If a government study takes three months, it's outdated before it's finished. The systems I was using a month ago aren't the same ones I'm using today.

So what exactly are you regulating? A snapshot that's already dead?

I saw a university ad recently promoting AI classes. On paper, that sounds smart. But by the time they build the curriculum, get approval, structure it, and teach it, it's already behind. Formal systems can't keep up with this pace.

And here's the part most people don't see. A lot of the real advancement isn't happening inside big companies. It's happening in small groups. Discord servers, Telegram chats, private communities. People building, testing, sharing, and iterating in real time.

One person figures something out. Another improves it. Another finds a use case. Another plugs it into a workflow. Another wraps an interface around it. And suddenly there's a system the public won't even hear about for a few months.

That's how this moves.

There are groups where people jump into voice chat, screen share what they're building, and break it down live. Use case, architecture, limits, where it breaks, how to improve it, where it can make money, what role it can replace, whether it should run local or cloud, whether it's got memory or retrieval or agents. That's where the edge is.

And that's why broad regulation is mostly fantasy. Governments can lean on big companies, sure. But how do you regulate thousands of small groups nobody even knows exist? You don't.

A lot of us are running local models anyway. On our own machines. Private. Customized. Nobody even knows they exist unless we say so. So how do you regulate that? You can't.

Trying to regulate this right now is like putting up a white picket fence halfway down a mountain and expecting it to stop a massive snowball that's already rolling. It's not happening. The movement is too fast, too distributed, and too private.

And while all of this is happening, companies are doing something people should really pay attention to. They're pushing AI adoption internally. Encouraging it. Tracking it. Rewarding employees who use it well.

Think about that. They're actively training people to become more efficient using systems that can eventually replace parts of their role.

It's almost a self-fulfilling plan for their own replacement.

And people still think someone is going to step in and stop this. Nobody is.

So when you see a company like Oracle cut 30,000 jobs, you shouldn't treat it like a one-off decision. It's part of a pattern. And there are more coming. This is going to become more common, not less.

The people who think regulation will stop it are missing how this actually works. This isn't one tool. It's thousands of people building thousands of things at the same time. The public sees the polished version. The builders are already ahead.

So no, this isn't about panic. But it is about waking up.

If you're sitting there assuming policy, ethics, or public pressure is going to protect your role, that's a bad bet. If you think this only affects junior positions, you're wrong. If you're not learning how to use these tools, build with them, sell with them, automate with them, or generate income with them, I don't know where you think you're going to land when this really accelerates.

Because it already has.

The takeaway is simple. Figure out where you stand right now. Are you learning it, using it, building with it, or getting replaced by it? Because this shift isn't coming. It's already here. And it's not slowing down for anyone.